A/B testing is a popular email marketing technique: you deploy two emails with a slight variation between them and split your subscriber list randomly across the two. The goal is to see which variation produces better results. A/B tests usually vary the content, the subject line, or the from/reply-to address. They take the guesswork out of figuring out what’s working in your email marketing.

How to A/B test

Before sending your email, decide what you are testing. Test only one difference at a time: if you change more than one element in your email, you won’t know which element was responsible for the change in opens or clicks. Make sure you are comparing the same variable in each email. Then follow up by optimizing your emails and running another test, so that each round narrows down the variables and gives you solid evidence to guide your marketing.

What to expect using A/B testing in WordFly:

- A/B testing is available for standard email campaigns. Automated triggered campaigns do not have an A/B testing option.

- Test both versions of your A/B test under the email campaign's Testing tab.

- The assigned subscriber list will automatically split randomly at the time of deployment. You will not know who is going to receive each campaign.

- Both halves of the subscriber list deploy at the same time after the list is split. As with any campaign, the mailings are processed through the mailing queue one subscriber at a time.

- Label your A/B test campaigns and use Reporting > Compare Campaigns to select this label and compare A and B results.

- Google Analytics tags will track each version separately through the tag utm_content. This tag will be set to version_A and version_B respectively. Learn more.

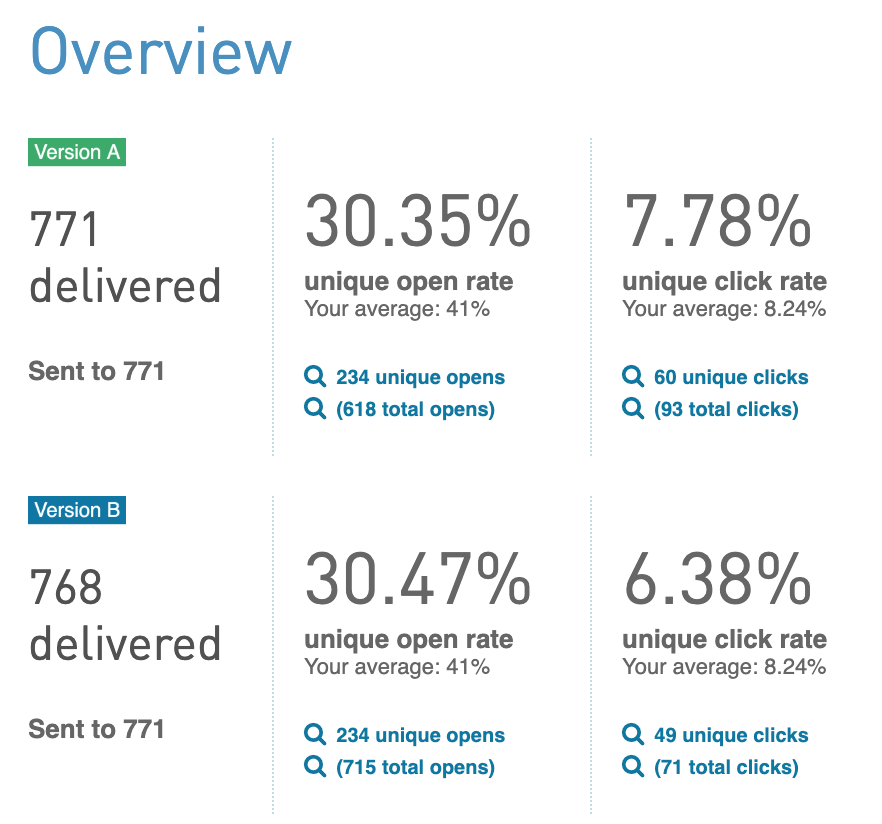
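
To illustrate what the utm_content tagging described above looks like on a link, here is a minimal Python sketch. WordFly applies these tags for you; the `tag_link` helper, the example URL, and the `utm_campaign` value are hypothetical and only show the shape of the resulting links.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_link(url: str, version: str) -> str:
    """Return url with utm_content set to version_A or version_B.

    Hypothetical helper for illustration; not part of WordFly.
    """
    if version not in ("A", "B"):
        raise ValueError("version must be 'A' or 'B'")
    parts = urlsplit(url)
    # Keep existing query parameters, dropping any earlier utm_content value.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != "utm_content"
    ]
    query.append(("utm_content", f"version_{version}"))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Example URL and campaign value are assumptions.
link_a = tag_link("https://example.org/tickets?utm_campaign=spring", "A")
link_b = tag_link("https://example.org/tickets?utm_campaign=spring", "B")
```

Because the two links differ only in utm_content, Google Analytics can attribute visits to Version A or Version B separately.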

- Review A/B results in the reporting section.

- A/B tests send to the entire assigned subscriber list. To test on only part of your audience, run the A/B test on a small segment of your list, determine which version performs better, and then send a follow-up email with that version to the remainder of your list.
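
One way to prepare a small test segment before importing lists into WordFly is sketched below in Python. This is an assumption about your own list preparation, not a WordFly feature: the `split_test_segment` function, the 20% fraction, and the example addresses are all hypothetical. WordFly still performs the random A/B split of the test segment itself at deployment.

```python
import random


def split_test_segment(subscribers, test_fraction=0.2, seed=None):
    """Randomly split subscribers into (test_segment, remainder).

    Hypothetical helper for illustration; not part of WordFly.
    """
    if not 0 < test_fraction <= 1:
        raise ValueError("test_fraction must be in (0, 1]")
    rng = random.Random(seed)  # a seed makes the split repeatable
    shuffled = list(subscribers)  # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    test_size = int(len(shuffled) * test_fraction)
    return shuffled[:test_size], shuffled[test_size:]


# Example addresses are made up.
subscribers = [f"user{i}@example.org" for i in range(1000)]
test_segment, remainder = split_test_segment(subscribers, test_fraction=0.2, seed=42)
```

You would assign `test_segment` to the A/B campaign and keep `remainder` for the follow-up send.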

Create an A/B campaign

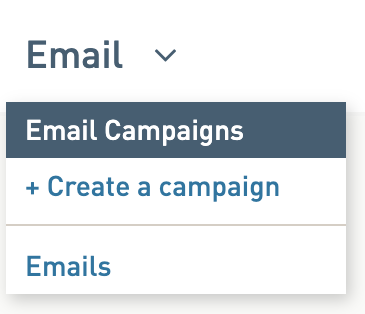

1. Go to Emails > Create a campaign

2. On the Settings tab, from the first drop-down select Standard.

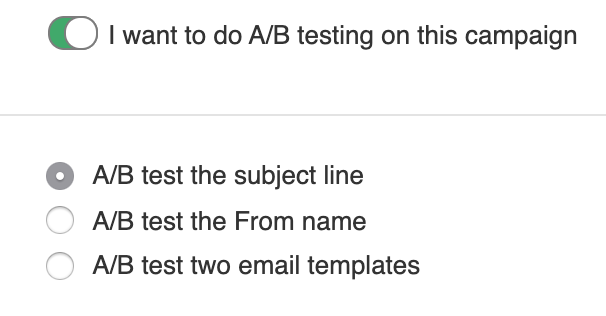

3. Check the box for A/B testing and select the type of test

4. Save the Settings tab and finish your email campaign setup. Send your A/B campaign.

If A/B testing two emails:

- Under the Email tab of your email campaign you will select Version A and Version B

- In most cases you will create Version B by duplicating Version A, since your changes won’t be very different from the original email

- Tip: Keep your test variable confined to one change for better tracking

5. Go to Reporting > Dashboard to review your A/B campaign results.
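
When reviewing results, it can help to check whether the gap between A and B is larger than random variation would explain. The Python sketch below uses a standard two-proportion z-test for that; it is not part of WordFly's reporting, and the `compare_rates` function and the open counts are hypothetical values you would replace with numbers from your own report.

```python
import math


def compare_rates(successes_a, sent_a, successes_b, sent_b):
    """Two-proportion z-test on open (or click) rates.

    Returns (rate_a, rate_b, z, p_value) with a two-sided p-value.
    Hypothetical helper for illustration; not part of WordFly.
    """
    rate_a = successes_a / sent_a
    rate_b = successes_b / sent_b
    # Pooled rate under the assumption that A and B perform the same.
    pooled = (successes_a + successes_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        return rate_a, rate_b, 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Made-up counts: 220 of 1000 opened Version A, 260 of 1000 opened Version B.
rate_a, rate_b, z, p_value = compare_rates(220, 1000, 260, 1000)
```

A small p-value (commonly below 0.05) suggests the difference is unlikely to be chance alone; with small lists, expect many tests to be inconclusive.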

A/B testing types

Here are ten examples of A/B tests you can try in WordFly:

| A/B TESTING IDEA | DETAILS |
| --- | --- |
| From name | Use "A/B test from name" to see how different names perform. |
| Name personalization | Use either "A/B test subject line" or "A/B test two emails" for this one. Use data fields to create the personalization. |
| Subject line | Use "A/B test subject line" and enter a different subject line for each version. |
| Special characters in subject line | Use "A/B test subject line". Enter separate subject lines and insert special characters in one of them. |
| Animated GIFs | Use "A/B test two emails" and insert a different animated GIF in each email. Learn more about adding animated gifs. |
| Embedded video | Use "A/B test two emails" and insert an embedded video into one email and no video in the other. Learn more about adding video to your email. |
| Button text and color | Use "A/B test two emails" and adjust the button styles in each email to see which one performs better. Learn more about global style updates in your emails. |
| Button placement | Use "A/B test two emails" and adjust the placement of your buttons in each one. Does a button placed higher in the email generate more engagement and conversions than one placed lower? |
| Postcard vs. newsletter design | Use "A/B test two emails" and adjust the designs. One design could have more content than the other. |
| Time of day or week | This is the only test that relies on long-term testing. WordFly has no option to send the two versions at different times of day or week. Instead, send one A/B test at one time in a given week, then send another A/B test at a different time or day the next week, and see how the results vary. Did one have better opens or clicks than the other? You can do this same type of analysis without using A/B testing. |